How Much Traffic and Conversions Are Websites Really Getting from AI?

The artificial intelligence industry has spent the last eighteen months painting a picture of transformative change. According to technology evangelists and venture-backed startups, AI represents an existential threat to search engines, a revolutionary distribution channel, and an untapped source of website traffic. When you examine the actual data, however, a starkly different narrative emerges.

The Reality of AI-Driven Traffic

An analysis of many high-traffic websites with many AI citations and mentions suggests that AI systems contribute less than 1% of total traffic for virtually all of them. This isn’t speculation based on limited samples – it’s a conclusion supported by web analytics data, server logs, and traffic attribution studies conducted across diverse industries. The figure remains consistent whether the site is an e-commerce platform, a SaaS company, or a content publisher.

This finding matters because it contradicts the premise underlying much of the AI optimization industry. If AI were genuinely functioning as a meaningful traffic source, we would see measurable, directional shifts in analytics dashboards. Instead, what we observe is statistical noise – traffic changes so small they fall within the normal variance of daily fluctuation.
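One rough way to check the "statistical noise" claim against your own analytics is to compare average AI-referred sessions per day with the day-to-day standard deviation of total sessions. The sketch below is illustrative only: the function name `within_daily_noise`, the threshold `k`, and all of the numbers are assumptions, not data from any real site.

```python
from statistics import mean, stdev


def within_daily_noise(daily_sessions, daily_ai_sessions, k=1.0):
    """Return True if mean AI-referred sessions per day is smaller than
    k standard deviations of total daily sessions.

    Both arguments are per-day counts covering the same days.
    The threshold k is an assumption; pick whatever fits your data.
    """
    if len(daily_sessions) < 2:
        raise ValueError("need at least two days of data")
    if len(daily_sessions) != len(daily_ai_sessions):
        raise ValueError("both series must cover the same days")
    noise = stdev(daily_sessions)  # sample standard deviation of daily totals
    return mean(daily_ai_sessions) < k * noise


# Hypothetical week of data, invented for illustration.
total_sessions = [10200, 9800, 10050, 9900, 10400, 9700, 10150]
ai_sessions = [40, 35, 52, 38, 45, 30, 41]

print(within_daily_noise(total_sessions, ai_sessions))
```

If the function returns True, AI referrals are smaller than the ordinary daily swing in total traffic, which is the situation described above. It is a coarse screen, not a formal significance test.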

The constraint isn’t subtle or debatable. Major AI applications, including ChatGPT, Claude, Gemini, and others, receive hundreds of millions of queries monthly, but query volume is not referral volume. Most AI outputs don’t include links, citations occur inconsistently, and users rarely click through to sources. Once you factor those in, the traffic that reaches any individual website becomes functionally irrelevant for business decision-making.

The Data Problem

Optimization companies selling AI traffic solutions face a credibility problem they’ve addressed through an unconventional strategy: they’ve abandoned rigorous measurement entirely.

Request specific attribution data from any company claiming to have optimized websites for AI traffic, and you’ll encounter the same response pattern. These firms cite proprietary methodologies, confidential client agreements, and data that conveniently cannot be shared publicly. When pressed for even anonymized case studies showing meaningful traffic increases, most organizations either decline or produce studies with structural problems so severe that they provide no actionable insight.

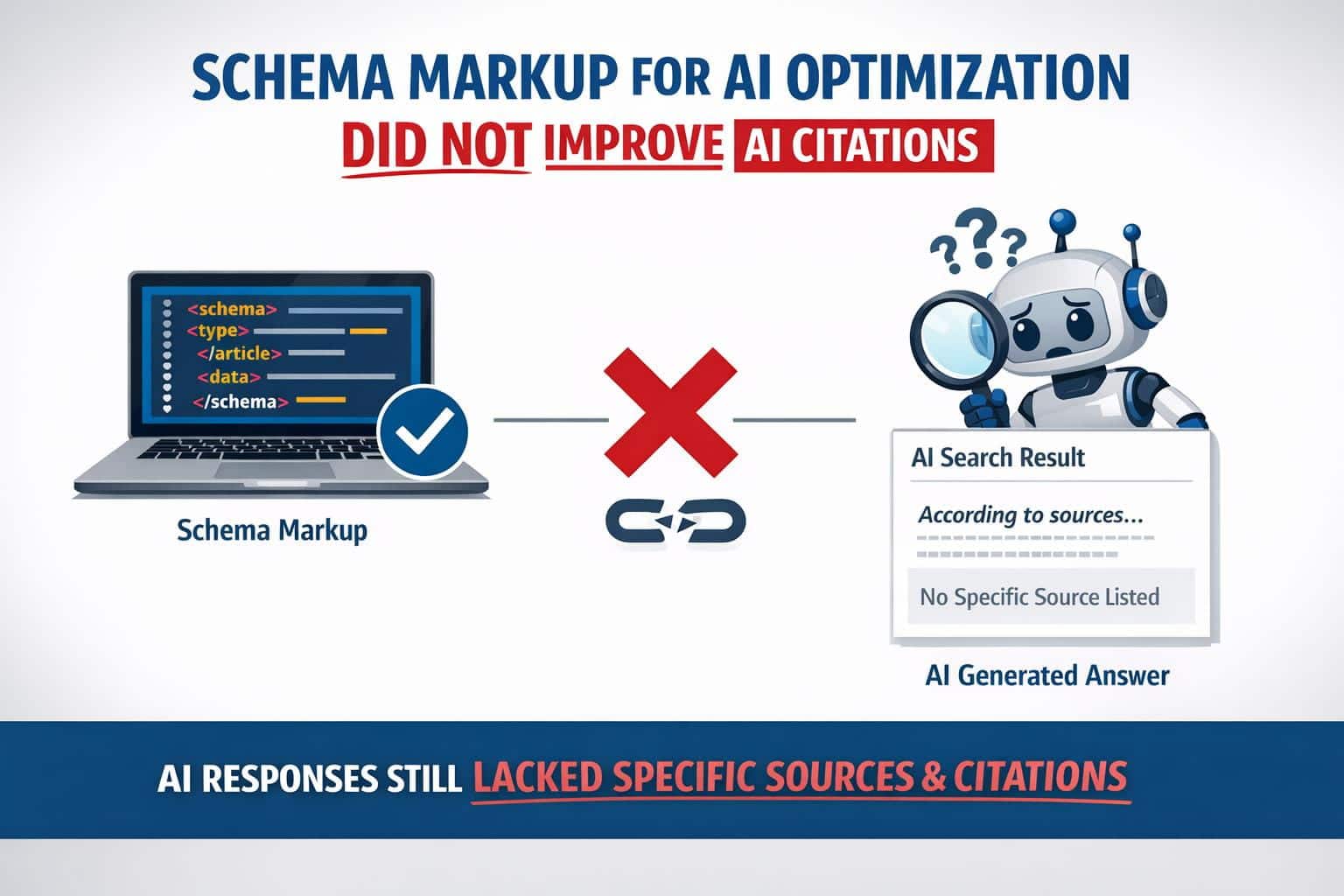

The published research emerging from these companies shares common characteristics. Sample sizes are small, often under fifty websites. Control groups are either absent or poorly designed. Attribution windows are unusually long, conflating AI traffic with organic search, direct traffic, and other sources. Most critically, the studies measure outputs that correlate weakly with actual business outcomes – citation counts rather than clicks, mentions rather than conversions, or indexing rates rather than traffic volume.

When independent researchers have examined these claims using publicly available data, the findings diverge significantly from vendor claims. The gap exists not because of methodology differences but because the original research was constructed to reach predetermined conclusions rather than to discover the truth.

The “Dark Social” Confusion

A narrative has emerged suggesting that AI traffic represents a form of “dark social” – website visits that occur through channels leaving no attribution trail. Under this framework, users receive information from AI systems, then visit websites directly without clicking links, thereby creating “dark” traffic that analytics platforms cannot measure.

If this dark social phenomenon were real, we would observe specific directional changes in website analytics. Direct traffic would increase relative to overall traffic. Referral traffic classifications would expand. Organic search traffic would show unexpected composition shifts. Conversion rate patterns would diverge from historical baselines in ways correlating with AI emergence and adoption.

None of these indicators has materialized at scale. Website operators report no anomalous direct traffic increases. Analytics platforms show referral traffic percentages within expected ranges. Organic search metrics follow predictable patterns unrelated to AI development. Most tellingly, websites publishing research on their actual AI-driven traffic report figures measured in fractions of a percent of daily volume, not in percentages meaningful to business strategy.

The dark social hypothesis functions primarily as an unfalsifiable explanation – when AI traffic fails to appear, proponents argue it appeared but remained invisible. This rhetorical move replaces empiricism with assumption.
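The first indicator listed above, a rise in direct traffic as a share of total traffic, is at least testable. A minimal sketch of one way to do it is a one-sided two-proportion z-test comparing a baseline period with a later period. The function name `direct_share_shift` and the session counts are hypothetical. A significant increase would not prove AI caused it; it only tests a condition the hypothesis requires.

```python
import math


def direct_share_shift(direct_before, total_before, direct_after, total_after):
    """One-sided two-proportion z-test for an increase in direct-traffic share.

    Returns (difference in share, z statistic, one-sided p-value).
    """
    if total_before <= 0 or total_after <= 0:
        raise ValueError("totals must be positive")
    p_before = direct_before / total_before
    p_after = direct_after / total_after
    # Pooled share under the null hypothesis of no change.
    pooled = (direct_before + direct_after) / (total_before + total_after)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_before + 1 / total_after))
    if se == 0:
        raise ValueError("standard error is zero; shares are degenerate")
    z = (p_after - p_before) / se
    # P(Z > z) for a standard normal variable.
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return p_after - p_before, z, p_value


# Hypothetical session counts, invented for illustration.
diff, z, p = direct_share_shift(3000, 10000, 3050, 10000)
print(f"share change={diff:.4f}, z={z:.2f}, one-sided p={p:.3f}")
```

A large p-value means the direct share has not measurably risen, which is what the dark social narrative would need to explain.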

Why AI Isn’t Replacing Search

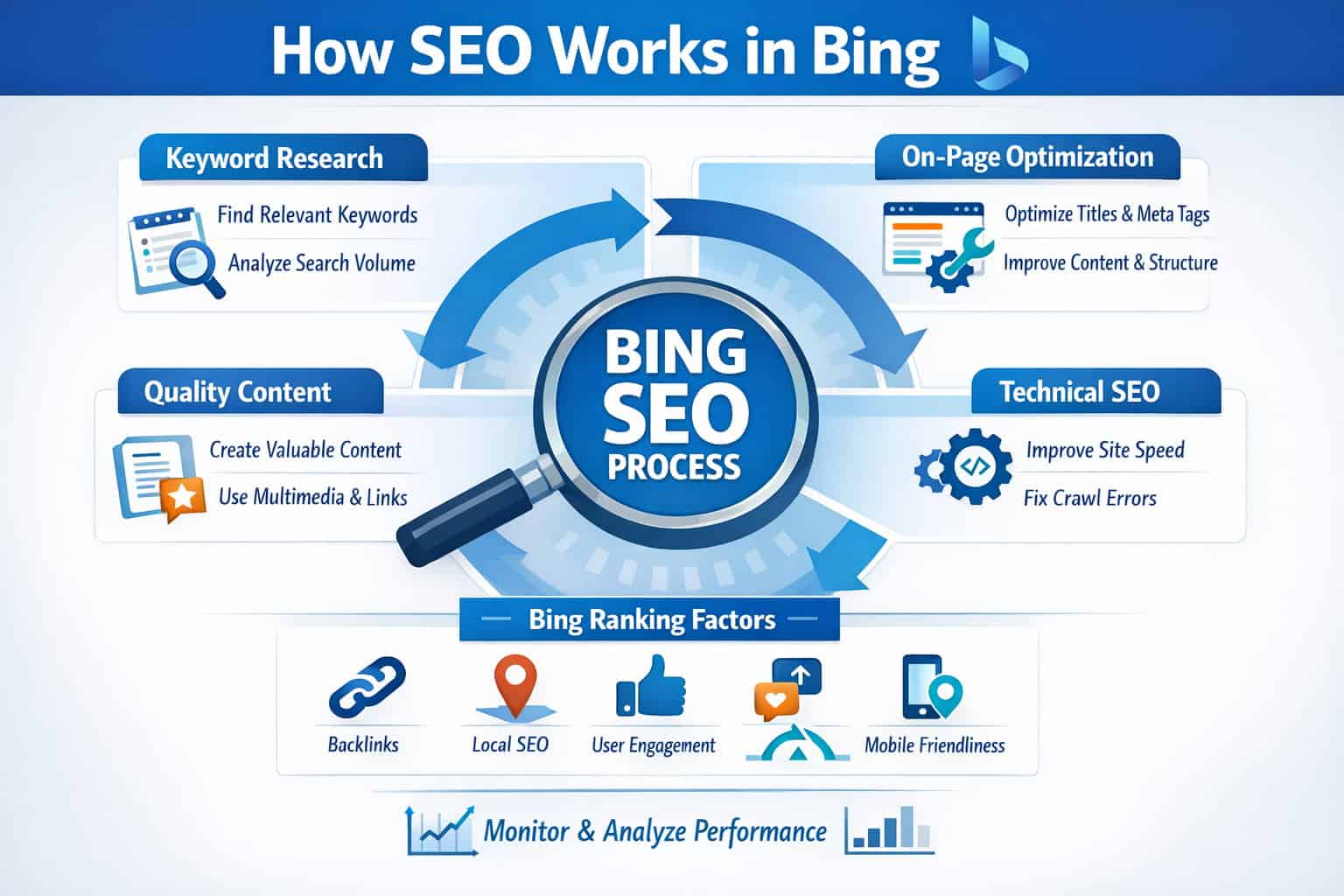

Foundational misunderstandings about AI capabilities have enabled exaggerated claims regarding search engine replacement. Conversational AI systems excel at information synthesis and natural language generation. They function poorly as discovery mechanisms for new, niche, or time-sensitive information.

Search engines operate through systematic indexing of the public web. This architecture enables discovery – users find resources they weren’t specifically seeking. AI systems, by contrast, work from training data frozen at specific points in time. They hallucinate with uncomfortable frequency. They cannot guarantee accuracy on factual queries. They don’t learn from individual searches or update to reflect new information. Most fundamentally, they provide no incentive mechanism for information discovery independent of queries.

Users still turn to search engines when they need current information, local results, specific product comparisons, or discovery of resources beyond their existing knowledge. These use cases comprise the majority of search activity. Conversational AI handles well-defined queries about established topics. It performs poorly on discovery queries, local searches, real-time information needs, and exploration.

The distinction proves critical for traffic projections. If search represented the only information-seeking behavior, replacement narratives would carry credibility. In reality, search addresses perhaps thirty to forty percent of information needs. The remainder is met through direct navigation to known resources, social media discovery, email, internal site search, and other channels, so even a complete displacement of search would leave most information-seeking behavior untouched.

What AI Actually Does Well

The legitimate applications for AI in business operations cluster around two categories: code generation and process automation. AI systems competently handle structured tasks with clear inputs and outputs. They accelerate development cycles, reduce repetitive work, and improve productivity in specialized domains.

GitHub Copilot represents the clearest success case – developers use AI to generate boilerplate code, handle routine programming tasks, and accelerate feature development. This utility extends to other automation scenarios where structured, rule-based processes benefit from AI acceleration. Customer service automation, content moderation, and data processing tasks all show genuine ROI.

Traffic and conversion generation occupy an entirely different category. These outcomes depend on user behavior change, discovery, and persuasion – domains where AI systems provide limited value. An AI cannot make your content more discoverable. It cannot change user preferences or convince skeptics. It cannot replace distribution strategy or audience building.

Companies that have genuinely benefited from AI integration did so by deploying the technology in alignment with its actual capabilities. They improved internal processes, reduced operational friction, and maintained focus on proven traffic and conversion strategies. They did not attempt to substitute AI for search engine optimization, paid advertising, content strategy, or audience development.

The Vendor Incentive Structure

Understanding why AI traffic claims have proliferated requires examining the incentives operating on companies selling optimization services. When a new technology emerges, first-mover advantage accrues to organizations establishing themselves as category experts. The window remains open only briefly before the market saturates.

This dynamic creates intense pressure to make bold claims, establish market presence, and acquire customers before competition intensifies. Ironically, the more uncertain the actual impact of a technology, the greater the incentive to make confident assertions. Companies with genuine, measurable results need not work as hard to convince buyers. Companies with marginal or nonexistent results must make more compelling claims.

AI optimization represents precisely this scenario. The actual impact on traffic and conversions is small enough that honest positioning would struggle to command premium pricing. Therefore, positioning emphasizes transformative potential, cites early data that hasn’t held up to scrutiny, and references studies that, examined closely, contain significant methodological problems.

This isn’t necessarily dishonesty so much as it is standard market dynamics. Companies operating in high-uncertainty environments with significant competitive pressure frequently make claims that, while not technically false, shade optimistic. Clients expecting results within six months feel disappointed when modest metrics emerge. Vendors, anticipating this reaction, establish expectations at the highest credible level.

Measurement Challenges and Honest Uncertainty

A portion of the confusion around AI traffic stems from genuine measurement challenges rather than intentional deception. Attribution systems were built assuming specific traffic sources – search, social media, email, direct traffic, and referrals. AI represents a source that doesn’t fit neatly into these categories.

A user could receive information from an AI system, then visit a website through a different channel. Attribution systems would credit the channel used for the visit, not the AI that inspired it. Conversely, a user might click a link within an AI system, generating traffic that analytics should capture but often don’t due to technical limitations.

These measurement gaps mean we cannot claim with absolute certainty that zero conversions originate from AI. What we can claim, with confidence, is that the volume is small enough to escape detection as a significant metric. When a traffic source becomes large enough to matter, it becomes large enough to measure.
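To put a number on the measurable portion, one approach is to classify each session's referrer by hostname and compute the AI share. The sketch below assumes you can export referrer URLs from server logs or an analytics tool. The hostname list is an illustrative assumption, not an exhaustive or authoritative registry, and the helper names are hypothetical.

```python
from urllib.parse import urlparse

# Illustrative list of AI assistant hostnames; extend or correct for your own data.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "claude.ai",
    "gemini.google.com",
    "perplexity.ai",
    "copilot.microsoft.com",
}


def is_ai_referrer(referrer):
    """Return True if the referrer URL's host matches a known AI assistant host."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    # Match the host itself or any subdomain of it.
    return any(host == h or host.endswith("." + h) for h in AI_REFERRER_HOSTS)


def ai_share(referrers):
    """Fraction of sessions whose referrer is an AI assistant.

    Sessions with an empty referrer count as non-AI, which is exactly
    the measurement gap described above.
    """
    referrers = list(referrers)
    if not referrers:
        return 0.0
    return sum(is_ai_referrer(r) for r in referrers) / len(referrers)


# Hypothetical sample of session referrers.
sample = [
    "https://www.google.com/",
    "https://chatgpt.com/",
    "",
    "https://news.example.com/article",
]
print(f"AI share: {ai_share(sample):.1%}")
```

Because visits with no referrer are counted as non-AI, this yields a lower bound on AI-originated visits rather than an exact figure.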

Strategic Implications for Website Operators

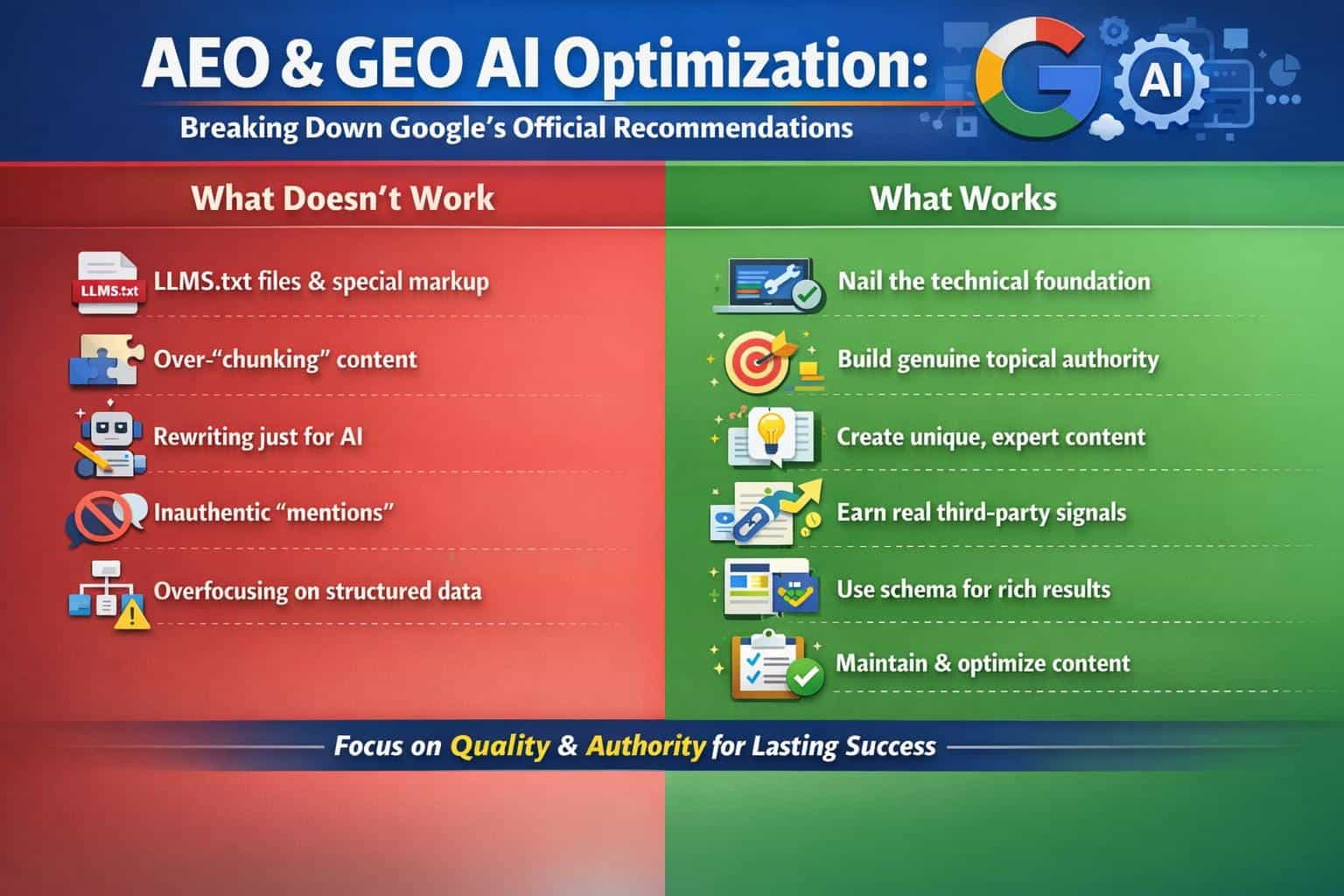

For companies making decisions about resource allocation, the implications of AI traffic’s current negligibility are significant. Time spent optimizing for AI mentions diverts effort from search engine optimization, content strategy, and audience development – channels with established, measurable ROI.

This doesn’t mean ignoring AI entirely. Monitoring the space for genuine changes makes sense. Experimenting with limited resources carries low risk. Building AI optimization into content workflows, provided the added effort is minimal, can capture upside if the impact increases substantially.

What the current evidence argues against is the reallocation of substantial budgets based on AI traffic projections. The risk-return profile doesn’t justify the investment. Better opportunities exist in channels with years of performance data, proven methodologies, and measurable results.

The Path Forward

As AI systems mature, the actual impact on website traffic may increase. Developers may improve citation functionality. Companies may create distribution mechanisms that more effectively drive users to source websites. Users may develop new discovery behaviors we haven’t yet encountered.

Until these changes materialize on an observable scale, however, treating AI as a primary traffic source represents a misallocation of resources. The honest assessment, supported by available data and independent analysis, is that AI currently drives less than one percent of website traffic for nearly all commercial websites. Most optimization efforts in this space generate marginal returns at best, and negative returns when they divert attention from higher-impact channels.

The gap between rhetoric and reality in the AI optimization space remains substantial. Narrowing that gap requires honest conversation about what current data actually shows – which is far less transformative than many entrepreneurs would prefer to admit.